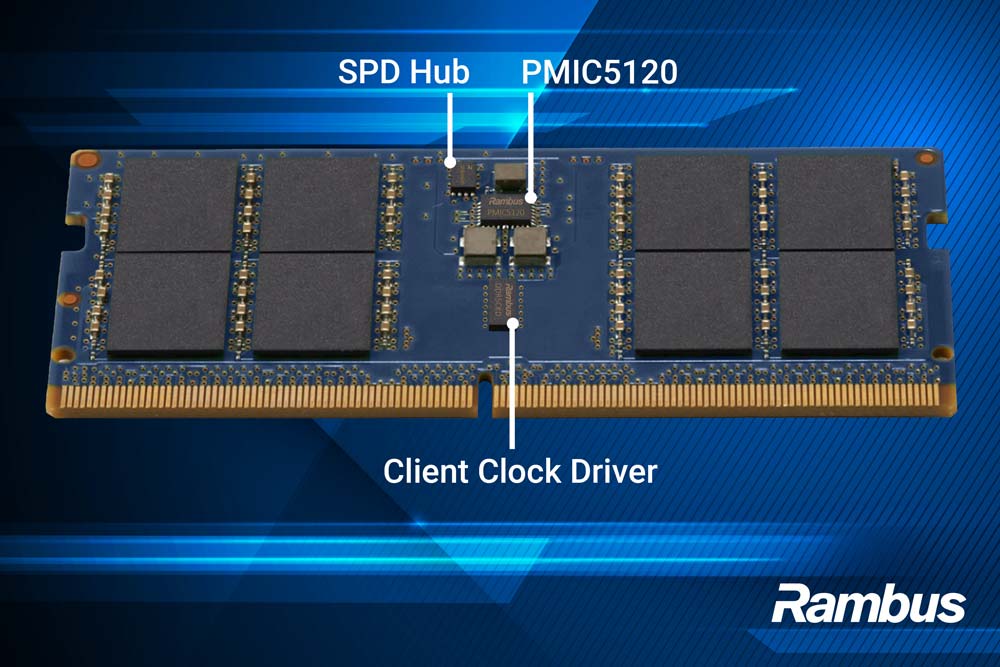

The Rambus Client Clock Driver (CKD) enables DDR5 CUDIMMs, CQDIMMs and CSODIMMs to operate at state-of-the-art data rates of up to 9600 Megatransfers per second (MT/s) and deliver breakthrough performance in next-generation AI PCs.

Rambus Enables Next-Generation AI PC Memory with Complete Client Chipset for CUDIMM and CSODIMM Modules

Industry’s fastest DDR5 Client Chipset, with Gen2 Client Clock Driver (CKD02), PMIC5120 and SPD Hub, offers breakthrough performance of up to 9600 MT/s

- Enables advanced agentic AI, gaming and content creation workloads in future generation PC desktops and laptops

- Supports high-bandwidth, high-capacity CUDIMM, CQDIMM and CSODIMM memory module form factors

- Extends Rambus comprehensive memory module chipset offerings for server to client platforms

SAN JOSE, Calif. – May 26, 2026 – Rambus Inc. (NASDAQ: RMBS), a premier chip and silicon IP provider making data faster and safer, today announced its complete DDR5 9600 Client Memory Module Chipset for high-performance CUDIMM, CQDIMM and CSODIMM modules in future-generation AI PCs. The chipset includes the new Gen2 Client Clock Driver (CKD02), which delivers breakthrough performance with support for PC memory module operation of up to 9600 MT/s, along with a Power Management IC (PMIC5120) and a Serial Presence Detect Hub (SPD Hub).

With the rise of agentic AI, PCs now plan, execute, and adapt workflows in real time. These workloads require persistent context, concurrent processing, and continuous data movement between the processor and system memory, requiring significant increases in both bandwidth and capacity. At the same time, scaling DDR5 memory beyond 6400 MT/s introduces new technical challenges, including signal degradation, clock jitter, and timing instability. To address these challenges, the industry is transitioning to clocked memory modules, including CUDIMM and CQDIMM for desktops and CSODIMM for laptops, which incorporate an on-module client clock driver (CKD) to condition and redistribute the clock signal.

The new Rambus DDR5 9600 Client Chipset provides a complete solution for clocked DDR5 modules operating from 8000 to 9600 MT/s. Designed for performance and scalability, the chipset supports next-generation AI PCs, notebooks, and workstations. By addressing signal integrity, power delivery, and system coordination at the module level, Rambus simplifies the design and deployment of high-performance memory module solutions.
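As a rough illustration of what these data rates mean, the sketch below converts a transfer rate in MT/s into peak theoretical bandwidth per module. It assumes a 64-bit data bus per module (two 32-bit DDR5 subchannels, ECC bits not counted) and ignores refresh, command overhead and other efficiency losses. The constant and function names are illustrative, not taken from Rambus.

```python
# Peak (theoretical) bandwidth of a DDR5 client module from its data rate.
# Assumptions: 64-bit data bus per module (two 32-bit subchannels), ECC bits
# not counted, no refresh/command overhead. Sustained bandwidth will be lower.

BUS_WIDTH_BITS = 64  # assumed non-ECC client module data width


def peak_bandwidth_gbps(data_rate_mts: int, bus_width_bits: int = BUS_WIDTH_BITS) -> float:
    """Return peak bandwidth in GB/s (decimal units: 1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits // 8
    transfers_per_second = data_rate_mts * 10**6
    return transfers_per_second * bytes_per_transfer / 10**9


if __name__ == "__main__":
    for rate in (6400, 8000, 9600):
        print(f"DDR5-{rate}: {peak_bandwidth_gbps(rate):.1f} GB/s per module")
```

Under these assumptions, moving from 6400 to 9600 MT/s raises peak per-module bandwidth from 51.2 GB/s to 76.8 GB/s, a 50% increase.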

“Agentic workloads are fundamentally more memory-hungry, driving the need for higher memory bandwidth, greater capacity, and improved efficiency in AI-enabled PCs,” said Rami Sethi, SVP and general manager of Memory Interface Chips at Rambus. “Our DDR5 9600 Client Chipset, featuring the Gen2 Client Clock Driver, delivers the performance foundation needed to enable this new era of intelligent, high-performance client systems for AI-driven productivity, next-generation gaming and professional content creation.”

“As AI-driven workloads become increasingly pervasive across client devices, memory subsystem innovation will be key to unlocking their full potential,” said Jeff Janukowicz, research vice president at IDC. “To meet growing performance demands, the industry is transitioning to clocked memory architectures such as CUDIMM and CSODIMM, which are designed to address signal integrity and timing challenges at higher data rates. Complete chipset solutions that deliver stable, high-speed operation will play a critical role in accelerating the adoption of next-generation AI PCs.”

The Rambus DDR5 9600 Client Chipset supports high-bandwidth, high-capacity clocked client memory modules and includes:

- Gen2 Client Clock Driver retimes, conditions and distributes the clock sent from the processor to the DRAM devices on the DIMM

- PMIC5120 efficiently steps down the system voltage supply to the voltage levels needed to power the DRAM and all other active chips on the module

- SPD Hub enables communication of module identification, configuration, and telemetry

More Information

Learn more about the Rambus DDR5 9600 Client Memory Module Chipset at: https://www.rambus.com/memory-interface-chips/ddr5-client-dimm-chipset/

About Rambus Inc.

Rambus delivers industry-leading chips and silicon IP for the data center and AI infrastructure. With over three decades of advanced semiconductor experience, our products and technologies address the critical bottlenecks between memory and processing to accelerate data-intensive workloads. By enabling greater bandwidth, efficiency and security across next generation computing platforms, we make data faster and safer. For more information, visit rambus.com.

Forward-looking statements

Information set forth in this press release, including statements as to Rambus’ outlook and financial estimates and statements as to the expected timing and effects of Rambus products, constitute forward-looking statements within the meaning of the safe harbor provisions of the Private Securities Litigation Reform Act of 1995.

These statements are based on various assumptions and the current expectations of the management of Rambus and may not be accurate because of risks and uncertainties surrounding these assumptions and expectations. Factors listed below, as well as other factors, may cause actual results to differ significantly from these forward-looking statements. There is no guarantee that any of the events anticipated by these forward-looking statements will occur, or what effect they will have on the operations or financial condition of Rambus. Forward-looking statements included herein are made as of the date hereof, and Rambus undertakes no obligation to publicly update or revise any forward-looking statement unless required to do so by federal securities laws.

Major risks, uncertainties and assumptions include, but are not limited to: any statements regarding anticipated operational and financial results; any statements of expectation or belief; other factors described under “Risk Factors” in Rambus’ Annual Report on Form 10-K and Quarterly Reports on Form 10-Q; and any statements of assumptions underlying any of the foregoing. It is not possible to predict or identify all such factors. Consequently, while the list of factors presented here is considered representative, no such list should be considered to be a complete statement of all potential risks and uncertainties.

Rambus Introduces PCIe® 7.0 Switch IP with Time Division Multiplexing for Scalable AI and Data Center Infrastructure

Rambus PCIe® 7.0 Switch IP with Time Division Multiplexing enables efficient, scalable PCIe fabrics that optimize link utilization and reduce system complexity for scale up and scale out of distributed AI clusters and high-performance computing networks

- Supports bandwidth scaling, low latency, and efficient data movement for AI, cloud, and HPC systems

- Increases link utilization through intelligent traffic multiplexing, enabling simpler architectures and scalable disaggregated and pooled compute designs

- Extends the industry-leading Rambus PCIe IP portfolio, which spans switches, controllers, retimers, and debug solutions, to support next‑generation AI infrastructure

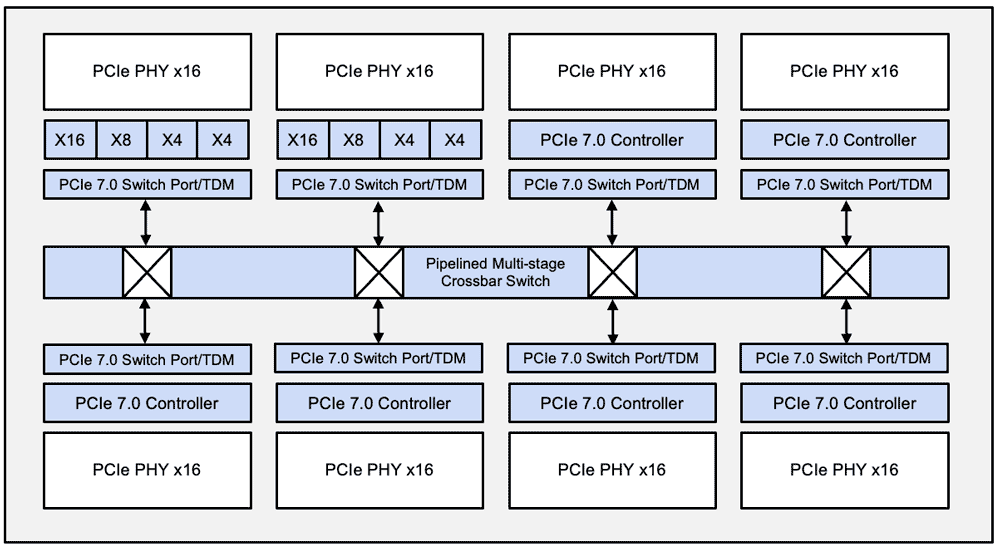

Rambus PCIe 7.0 Switch IP with Time Division Multiplexing

SAN JOSE, Calif. — May 5, 2026 — Rambus Inc. (NASDAQ: RMBS), a premier chip and silicon IP provider making data faster and safer, today announced the Rambus PCIe® 7.0 Switch IP with Time Division Multiplexing (TDM), a new addition to its advanced interconnect IP portfolio designed to address the rapidly escalating bandwidth, latency, and scalability requirements of AI, cloud, and high-performance computing (HPC) systems.

As AI infrastructure grows in scale and architectural complexity, system designers are increasingly challenged to move massive volumes of data efficiently across CPUs, GPUs, accelerators, and NVMe storage. The Rambus PCIe 7.0 Switch IP with TDM is architected to help meet these demands by enabling more flexible and efficient utilization of PCIe links, supporting emerging disaggregated and pooled compute architectures while maintaining low latency and deterministic performance.

Rambus PCIe 7.0 Switch IP with TDM Optimized for Next-Generation AI and Data Center SoCs

Built on the PCIe 7.0 specification, Rambus’ newest switch IP is optimized for next‑generation AI and data center SoCs that require extreme bandwidth density, advanced traffic management, and seamless scalability. By incorporating TDM capabilities, the switch enables designers to intelligently schedule and multiplex traffic across shared links, helping maximize fabric utilization while supporting diverse workload profiles, from large‑scale AI training to latency‑sensitive inference and data movement.
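The release does not describe how the switch schedules traffic. As a generic illustration of the time-division idea only, and not Rambus' design, the sketch below builds one TDM frame in which each flow owns a fixed number of time slots on a shared link, with slots interleaved by smooth weighted round-robin. The flow names, weights and the 100-unit link rate are hypothetical.

```python
from collections import Counter


def build_tdm_frame(weights: dict[str, int]) -> list[str]:
    """Build one TDM frame in which each flow owns `weight` time slots.

    Slots are interleaved with smooth weighted round-robin so that a flow's
    slots are spread across the frame rather than bunched together.
    """
    total = sum(weights.values())
    credit = {flow: 0 for flow in weights}
    frame = []
    for _ in range(total):
        for flow, weight in weights.items():
            credit[flow] += weight  # every flow earns credit each slot
        # Highest credit wins the slot; ties go to the flow listed first.
        chosen = max(credit, key=credit.get)
        credit[chosen] -= total  # the winner pays for the slot
        frame.append(chosen)
    return frame


def link_share(frame: list[str], link_rate: float) -> dict[str, float]:
    """Rate each flow gets, assuming every slot carries the same payload."""
    counts = Counter(frame)
    return {flow: link_rate * n / len(frame) for flow, n in counts.items()}


if __name__ == "__main__":
    # Hypothetical flows and weights on an arbitrary 100-unit link.
    frame = build_tdm_frame({"training": 4, "inference": 2, "storage": 2})
    print(frame)
    print(link_share(frame, 100.0))
```

With weights of 4, 2 and 2, the training flow receives half of each eight-slot frame and the other two flows a quarter each. Because the slot pattern repeats every frame, each flow's share and its longest wait for a slot are known in advance.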

“The acceleration of AI is fundamentally reshaping system architectures, and it’s no longer sufficient to simply add more lanes or more endpoints,” said Simon Blake‑Wilson, senior vice president and general manager of Silicon IP at Rambus. “With our PCIe 7.0 Switch IP with TDM, Rambus is giving system architects a new degree of freedom to scale bandwidth efficiently and deterministically, while reducing complexity and improving overall system utilization. This is a critical enabler for scale up and scale out of the next wave of advanced AI clusters and HPC networks.”

“AI infrastructure is increasingly defined by how efficiently data can move between heterogeneous compute and memory resources,” said Jeff Janukowicz, VP, Semiconductors and Enabling Technologies at IDC. “Advanced PCIe switching technologies that improve link utilization and enable flexible traffic orchestration will be key to building scalable, cost‑effective AI platforms as next‑generation interconnect technology evolves.”

Rambus PCIe 7.0 Switch IP with TDM Expands Industry-Leading PCIe IP Portfolio

The Rambus PCIe 7.0 Switch IP with TDM is designed to integrate seamlessly into leading-edge ASIC platforms and complements Rambus’ broader PCIe 7.0 IP portfolio, which includes controllers, retimers, and debug solutions. Together, these IP offerings help customers accelerate time‑to‑market while addressing the demanding performance, power, and reliability requirements of modern AI infrastructure.

The Rambus PCIe 7.0 Switch IP with TDM reinforces the company’s long‑standing leadership in high‑speed interface IP and its commitment to delivering differentiated interconnect technologies that help customers solve the most challenging problems in AI, cloud, and HPC infrastructure.

More Information:

Learn more about the Rambus PCIe 7.0 Switch IP with TDM and Rambus’ industry-leading family of PCIe solutions at www.rambus.com/interface-ip/pci-express/.

Press Contact:

Cori Pasinetti

Rambus Corporate Communications

t: (650) 309-6226

[email protected]

Rambus Appoints Sumeet Gagneja as Chief Financial Officer

Industry-proven finance leader brings deep semiconductor, data center and AI-driven computing ecosystem expertise to support long-term profitable growth

SAN JOSE, Calif., April 29, 2026 — Rambus Inc. (NASDAQ: RMBS) today announced the appointment of Sumeet Gagneja as senior vice president and chief financial officer, effective April 29, 2026. Mr. Gagneja joins Rambus with more than two decades of financial and operational leadership across the semiconductor, data center, and AI-driven computing ecosystem. Mr. Gagneja will oversee the company’s global finance organization, including financial strategy, capital allocation, and investor engagement, reporting to president and chief executive officer Luc Seraphin.

“Sumeet is a highly experienced finance leader with deep knowledge of the semiconductor and data center ecosystem,” said Luc Seraphin, president and chief executive officer of Rambus. “He brings a strong track record of helping companies scale with disciplined execution and a focus on value creation, and will be an outstanding addition to the leadership team as we continue to drive long-term profitable growth.”

Mr. Gagneja most recently served as divisional CFO for AMD’s Data Center segment. Previously, he was CFO of Western Digital’s Flash business and held senior finance leadership roles at Xilinx, Innovium, Maxim Integrated, Avago, and Intel. Across these roles, he supported capital allocation, mergers and acquisitions, operational planning, and investor and analyst engagement, bringing a disciplined and execution-focused approach to scaling complex technology businesses.

“I am excited to join Rambus and support its continued progress,” said Mr. Gagneja. “The company has a strong foundation, a differentiated portfolio, and a clear opportunity to deliver long-term profitable growth. I look forward to working with the leadership team to help drive disciplined execution and create durable value for shareholders.”

Mr. Gagneja holds a Master of Business Administration with high distinction from the University of Michigan Ross School of Business and a master’s degree in mechanical engineering from Wayne State University. He is a California Certified Public Accountant.

Forward-Looking Statements

This release contains forward-looking statements under the Private Securities Litigation Reform Act of 1995, including those relating to Rambus’ expectations regarding business opportunities, the Company’s ability to deliver long-term, profitable growth, product and investment strategies, and the Company’s outlook and financial guidance for the second quarter of 2026 and related drivers, and the Company’s ability to effectively manage market challenges. Such forward-looking statements are based on current expectations, estimates and projections, management’s beliefs and certain assumptions made by the Company’s management. Actual results may differ materially. The Company’s business generally is subject to a number of risks which are described more fully in Rambus’ periodic reports filed with the Securities and Exchange Commission. The Company undertakes no obligation to update forward-looking statements to reflect events or circumstances after the date hereof.

Contact:

Nicole Noutsios

Rambus Investor Relations

(510) 315-1003

[email protected]

Rambus Reports First Quarter 2026 Financial Results

- Achieved strong Q1 results, delivering quarterly product revenue of $88.0 million, up 15% year over year

- Generated strong quarterly cash from operations of $83.2 million

- Expanded product and IP offerings for next-generation AI platforms, including the LPDDR5X SOCAMM2 server module chipset and the industry’s fastest HBM4E memory controller IP

SAN JOSE, Calif. – April 27, 2026 – Rambus Inc. (NASDAQ:RMBS), a provider of industry-leading chips and IP making data faster and safer, today reported financial results for the first quarter ended March 31, 2026. GAAP revenue for the first quarter was $180.2 million, licensing billings were $70.8 million, product revenue was $88.0 million, and contract and other revenue was $22.6 million. The Company also generated $83.2 million in cash from operating activities in the first quarter.

“Rambus opened 2026 with a solid first quarter, delivering financial results in line with guidance and generating strong cash from operations,” said Luc Seraphin, president and chief executive officer of Rambus. “The growth of AI inference and agentic workloads in the data center continues to drive demand for higher memory bandwidth, efficient data movement, and scalable connectivity. With expanding offerings across chips and IP, Rambus is well positioned to support next-generation AI platforms and drive profitable long-term growth.”

Quarterly Financial Review (Three Months Ended March 31)

| (In millions, except for percentages and per share amounts) | GAAP 2026 | GAAP 2025 | Non-GAAP (1) 2026 | Non-GAAP (1) 2025 |
| --- | --- | --- | --- | --- |
| Revenue | | | | |
| Product revenue | $88.0 | $76.3 | $88.0 | $76.3 |
| Royalties | 69.6 | 74.0 | 69.6 | 74.0 |
| Contract and other revenue | 22.6 | 16.4 | 22.6 | 16.4 |
| Total revenue | 180.2 | 166.7 | 180.2 | 166.7 |
| Cost of product revenue | 33.7 | 30.6 | 33.6 | 30.4 |
| Cost of contract and other revenue | 1.1 | 0.6 | 1.1 | 0.6 |
| Amortization of acquired intangible assets (included in total cost of revenue) | 1.7 | 1.7 | — | — |
| Total operating expenses | 81.9 | 70.7 | 69.9 | 59.4 |
| Operating income | $61.8 | $63.1 | $75.6 | $76.3 |
| Operating margin | 34% | 38% | 42% | 46% |
| Net income | $59.9 | $60.3 | $69.3 | $64.6 |
| Diluted net income per share | $0.55 | $0.56 | $0.63 | $0.59 |
| Licensing billings (operational metric) (2) | $70.8 | $73.3 | $70.8 | $73.3 |

(1) See “Supplemental Reconciliation of GAAP to Non-GAAP Results” table included below. Note that the applicable non-GAAP measures are presented and that revenue and cash provided by operating activities are solely presented on a GAAP basis. Additionally, licensing billings is presented as an operational metric, which is defined below.

(2) Licensing billings is an operational metric that reflects amounts invoiced to our licensing customers during the period, as adjusted for certain differences relating to advanced payments for variable licensing agreements.
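Operating margin in the Quarterly Financial Review table is operating income divided by total revenue. The sketch below recomputes the four reported margins from the rounded $ million figures in that table; the dictionary and function names are illustrative.

```python
# Operating margin = operating income / total revenue ($ millions, rounded,
# as shown in the Quarterly Financial Review table).
FIGURES = {
    "GAAP 2026": (61.8, 180.2),
    "GAAP 2025": (63.1, 166.7),
    "Non-GAAP 2026": (75.6, 180.2),
    "Non-GAAP 2025": (76.3, 166.7),
}


def operating_margin_pct(operating_income: float, total_revenue: float) -> int:
    """Operating margin as a whole-number percentage."""
    return round(100 * operating_income / total_revenue)


if __name__ == "__main__":
    for label, (income, revenue) in FIGURES.items():
        print(f"{label}: {operating_margin_pct(income, revenue)}%")
```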

GAAP revenue for the quarter was $180.2 million, which was above the mid-point of the Company’s expectations. The Company also had licensing billings of $70.8 million, product revenue of $88.0 million, and contract and other revenue of $22.6 million. The Company had total GAAP cost of revenue of $36.5 million and operating expenses of $81.9 million. The Company also had total non-GAAP operating expenses of $104.6 million (including non-GAAP cost of revenue of $34.7 million). The Company had GAAP diluted net income per share of $0.55 and non-GAAP diluted net income per share of $0.63. The Company’s basic share count was 108 million shares and its diluted share count was 110 million shares.
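The $104.6 million non-GAAP total combines non-GAAP cost of revenue with non-GAAP operating expenses. The sketch below reproduces it from the thousands-level figures in the reconciliation and income statement tables, assuming (as the reconciliation suggests) that cost of contract and other revenue carries no non-GAAP adjustment and that amortization of acquired intangible assets is excluded entirely.

```python
# Non-GAAP total operating costs = non-GAAP cost of revenue + non-GAAP
# operating expenses. Figures in $ thousands for Q1 2026.
NON_GAAP_COST_OF_PRODUCT_REVENUE = 33_590   # GAAP 33,729 less 139 of stock-based compensation
COST_OF_CONTRACT_AND_OTHER_REVENUE = 1_128  # assumed: no non-GAAP adjustment
NON_GAAP_OPERATING_EXPENSES = 69_855
# Amortization of acquired intangible assets (1,675) is left out of the
# non-GAAP cost of revenue entirely.

non_gaap_cost_of_revenue = NON_GAAP_COST_OF_PRODUCT_REVENUE + COST_OF_CONTRACT_AND_OTHER_REVENUE
non_gaap_total = non_gaap_cost_of_revenue + NON_GAAP_OPERATING_EXPENSES

print(f"Non-GAAP cost of revenue: ${non_gaap_cost_of_revenue / 1000:.1f} million")
print(f"Non-GAAP total operating costs and expenses: ${non_gaap_total / 1000:.1f} million")
```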

Cash, cash equivalents, and marketable securities as of March 31, 2026 were $786.1 million, an increase of $24.3 million compared to December 31, 2025, mainly due to $83.2 million in cash provided by operating activities, partially offset by $38.4 million in tax payments related to net share settlement of equity awards and $17.0 million paid for capital expenditures.
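The $24.3 million increase can be checked against the balance sheet, and the drivers named above can be netted against it. In the sketch below, the residual is simply whatever the release does not itemize; it is a derived difference, not a reported figure.

```python
# $ thousands, from the condensed consolidated balance sheets.
cash_and_securities_mar_2026 = 134_324 + 651_815  # cash and cash equivalents + marketable securities
cash_and_securities_dec_2025 = 182_826 + 579_005
increase = cash_and_securities_mar_2026 - cash_and_securities_dec_2025

# Drivers named in the release, in $ millions (rounded as reported).
named_drivers = {
    "cash provided by operating activities": 83.2,
    "taxes on net share settlement of equity awards": -38.4,
    "capital expenditures": -17.0,
}
# Whatever the named drivers do not explain (derived, not reported).
other_items = increase / 1000 - sum(named_drivers.values())

print(f"Increase: ${increase / 1000:.1f} million")
print(f"Not itemized in the release: {other_items:.1f} million")
```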

2026 Second Quarter Outlook

The Company will discuss its full revenue guidance for the second quarter of 2026 during its upcoming conference call. The following table sets forth the second quarter outlook for other measures.

| (In millions) | GAAP | Non-GAAP (1) |
| --- | --- | --- |
| Licensing billings (operational metric) (2) | $76 – $82 | $76 – $82 |
| Product revenue (GAAP) | $95 – $101 | $95 – $101 |
| Contract and other revenue (GAAP) | $19 – $25 | $19 – $25 |
| Total operating costs and expenses | $131 – $127 | $114 – $110 |
| Interest and other income (expense), net | $7 | $7 |
| Diluted share count | 110 | 110 |

(1) See “Reconciliation of GAAP Forward-Looking Estimates to Non-GAAP Forward-Looking Estimates” table included below.

(2) Licensing billings is an operational metric that reflects amounts invoiced to our licensing customers during the period, as adjusted for certain differences relating to advanced payments for variable licensing agreements.

For the second quarter of 2026, the Company expects licensing billings to be between $76 million and $82 million. The Company also expects royalty revenue to be between $72 million and $78 million, product revenue to be between $95 million and $101 million, and contract and other revenue to be between $19 million and $25 million. Revenue is not without risk, and achieving revenue in this range will require that the Company sign customer agreements for various product sales and solutions licensing, among other matters.

The Company also expects operating costs and expenses to be between $131 million and $127 million. Additionally, the Company expects non-GAAP operating costs and expenses to be between $114 million and $110 million. These expectations also assume a tax rate of 16% and a diluted share count of 110 million, and exclude stock-based compensation expense of $15.7 million and amortization of acquired intangible assets of $1.5 million.
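The non-GAAP cost outlook is the GAAP outlook less the two excluded items. The sketch below uses `Decimal` to avoid floating-point rounding noise. The release's reconciliation table labels the $131.2 million figure "Low" and the $127.2 million figure "High", and the sketch keeps those labels.

```python
from decimal import Decimal

# Items excluded from the Q2 2026 non-GAAP cost outlook ($ millions).
STOCK_BASED_COMPENSATION = Decimal("15.7")
AMORTIZATION_OF_INTANGIBLES = Decimal("1.5")


def non_gaap_operating_costs(gaap_operating_costs: Decimal) -> Decimal:
    """GAAP outlook minus the excluded items."""
    return gaap_operating_costs - STOCK_BASED_COMPENSATION - AMORTIZATION_OF_INTANGIBLES


if __name__ == "__main__":
    # Column labels follow the release's reconciliation table.
    for label, gaap in (("Low", Decimal("131.2")), ("High", Decimal("127.2"))):
        print(f"{label}: GAAP ${gaap} -> non-GAAP ${non_gaap_operating_costs(gaap)}")
```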

Conference Call

The Company’s management will discuss the results of the quarter during a conference call scheduled for 2:00 p.m. PT today. The call will be audio; slides will be available online at investor.rambus.com, and a replay will be available for the next week at the following numbers: (800) 770-2030 (domestic) or (+1) 609-800-9909 (international) with ID# 9039474.

Non-GAAP Financial Information

In the commentary set forth above and in the financial statements included in this earnings release, the Company presents the following non-GAAP financial measures: cost of product revenue, operating expenses, operating income, operating margin, net income and diluted net income per share. In computing each of these non-GAAP financial measures, the following items were considered as discussed below: stock-based compensation expense, acquisition-related costs and retention bonus expense, amortization of acquired intangible assets, facility closure costs, income tax adjustment, and certain other one-time adjustments. The non-GAAP financial measures disclosed by the Company should not be considered a substitute for, or superior to, financial measures calculated in accordance with GAAP, and the financial results calculated in accordance with GAAP and reconciliations from these results should be carefully evaluated. Management believes the non-GAAP financial measures are appropriate for both its own assessment of, and to show investors, how the Company’s performance compares to other periods. The non-GAAP financial measures used by the Company may be calculated differently from, and therefore may not be comparable to, similarly titled measures used by other companies. A reconciliation from GAAP to non-GAAP results is included in the financial statements contained in this release.

The Company’s non-GAAP financial measures reflect adjustments based on the following items:

Stock-based compensation expense. These expenses primarily relate to employee stock purchase plans, and employee non-vested equity stock and non-vested stock units. The Company excludes stock-based compensation expense from its non-GAAP measures primarily because such expenses are non-cash expenses that the Company does not believe are reflective of ongoing operating results. Additionally, given the fact that other companies may grant different amounts and types of equity awards and may use different valuation assumptions, excluding stock-based compensation expense permits more accurate comparisons of the Company’s results with peer companies.

Acquisition-related costs. These expenses include all direct costs of certain acquisitions and the current period’s portion of any retention bonus expense associated with the acquisitions. The Company excludes these expenses in order to provide better comparability between periods, as they are related to acquisitions and have no direct correlation to the Company’s operations.

Amortization of acquired intangible assets. The Company incurs expenses for the amortization of intangible assets acquired in acquisitions. The Company excludes these items because these expenses are not reflective of ongoing operating results in the period incurred. These amounts arise from the Company’s prior acquisitions and have no direct correlation to the operation of the Company’s core business.

Facility closure costs. These charges consist of exit costs associated with a building lease that was abandoned in the first quarter of 2026 and primarily include lease expense, retirement of fixed assets, restoration costs and other moving costs. The Company excludes these charges because such charges are not directly related to ongoing business results and do not reflect expected future operating expenses.

Income tax adjustment. For purposes of internal forecasting, planning and analyzing future periods that assume net income from operations, the Company estimates a fixed, long-term projected tax rate of approximately 16 percent and 20 percent for 2026 and 2025, respectively, which consists of estimated U.S. federal and state tax rates, and excludes tax rates associated with certain items such as withholding tax, tax credits, deferred tax asset valuation allowance and the release of any deferred tax asset valuation allowance. Accordingly, the Company has applied these tax rates to its non-GAAP financial results for all periods in the relevant years to assist the Company’s planning.
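The release does not spell out how the income tax adjustment line is computed. One reading that reproduces both reported figures is: apply the fixed rate (16% for 2026, 20% for 2025) to non-GAAP pre-tax income (GAAP pre-tax income plus the non-GAAP add-backs), then subtract the GAAP tax provision. The sketch below encodes that inference; treat it as a reconstruction, not the Company's stated method.

```python
def income_tax_adjustment(gaap_pretax_income: int, add_backs: list[int],
                          gaap_tax_provision: int, rate_pct: int) -> int:
    """Extra tax charged in the non-GAAP results ($ thousands).

    Reconstruction (not the Company's stated formula): tax non-GAAP pre-tax
    income at the fixed long-term rate, then subtract the GAAP provision.
    """
    non_gaap_pretax_income = gaap_pretax_income + sum(add_backs)
    non_gaap_tax = round(non_gaap_pretax_income * rate_pct / 100)
    return non_gaap_tax - gaap_tax_provision


if __name__ == "__main__":
    # Add-backs: stock-based compensation, amortization of acquired
    # intangibles, facility closure (2026) or acquisition-related (2025) costs.
    q1_2026 = income_tax_adjustment(68_630, [11_453, 1_675, 730], 8_772, 16)
    q1_2025 = income_tax_adjustment(67_623, [11_383, 1_713, 21], 7_320, 20)
    print(q1_2026, q1_2025)
```

The results match the (4,426) and (8,828) income tax adjustment lines in the reconciliation, where the amount is shown as a reduction to net income.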

On occasion in the future, there may be other items, such as significant gains or losses from contingencies, that the Company may exclude in deriving its non-GAAP financial measures if it believes that doing so is consistent with the goal of providing useful information to investors and management.

Contact

John Allen

Vice President, Accounting and Interim Chief Financial Officer

(408) 462-8000

[email protected]

Rambus Inc.

Condensed Consolidated Balance Sheets

(Unaudited)

| (In thousands) | March 31, 2026 | December 31, 2025 |
| --- | --- | --- |
| ASSETS | | |
| Current assets: | | |
| Cash and cash equivalents | $134,324 | $182,826 |
| Marketable securities | 651,815 | 579,005 |
| Accounts receivable | 109,297 | 137,476 |
| Unbilled receivables | 24,869 | 25,209 |
| Inventories | 58,424 | 44,098 |
| Prepaids and other current assets | 21,151 | 20,202 |
| Total current assets | 999,880 | 988,816 |
| Intangible assets, net | 8,495 | 10,171 |
| Goodwill | 286,812 | 286,812 |
| Property and equipment, net | 113,278 | 113,051 |
| Operating lease right-of-use assets | 15,989 | 17,112 |
| Deferred tax assets | 101,484 | 105,542 |
| Other assets | 7,208 | 8,041 |
| Total assets | $1,533,146 | $1,529,545 |
| LIABILITIES & STOCKHOLDERS’ EQUITY | | |
| Current liabilities: | | |
| Accounts payable | $35,290 | $35,915 |
| Accrued salaries and benefits | 16,853 | 22,044 |
| Deferred revenue | 23,719 | 29,980 |
| EDA tools software licenses liability | 15,036 | 14,884 |
| Operating lease liabilities | 6,362 | 6,310 |
| Other current liabilities | 4,567 | 11,441 |
| Total current liabilities | 101,827 | 120,574 |
| Long-term operating lease liabilities | 17,042 | 18,671 |
| Long-term EDA tools software licenses liability | 16,014 | 20,908 |
| Other long-term liabilities | 5,023 | 4,967 |
| Total long-term liabilities | 38,079 | 44,546 |
| Total stockholders’ equity | 1,393,240 | 1,364,425 |
| Total liabilities and stockholders’ equity | $1,533,146 | $1,529,545 |

Rambus Inc.

Condensed Consolidated Statements of Income

(Unaudited)

Three Months Ended March 31

| (In thousands, except per share amounts) | 2026 | 2025 |
| --- | --- | --- |
| Revenue: | | |
| Product revenue | $88,002 | $76,309 |
| Royalties | 69,642 | 73,975 |
| Contract and other revenue | 22,545 | 16,380 |
| Total revenue | 180,189 | 166,664 |
| Cost of revenue: | | |
| Cost of product revenue | 33,729 | 30,583 |
| Cost of contract and other revenue | 1,128 | 546 |
| Amortization of acquired intangible assets | 1,675 | 1,713 |
| Total cost of revenue | 36,532 | 32,842 |
| Gross profit | 143,657 | 133,822 |
| Operating expenses: | | |
| Research and development | 50,229 | 42,620 |
| Sales, general and administrative | 31,670 | 28,058 |
| Total operating expenses | 81,899 | 70,678 |
| Operating income | 61,758 | 63,144 |
| Interest income and other income (expense), net | 7,151 | 4,856 |
| Interest expense | (279) | (377) |
| Interest and other income (expense), net | 6,872 | 4,479 |
| Income before income taxes | 68,630 | 67,623 |
| Provision for income taxes | 8,772 | 7,320 |
| Net income | $59,858 | $60,303 |
| Net income per share: | | |
| Basic | $0.55 | $0.56 |
| Diluted | $0.55 | $0.56 |
| Weighted-average shares used in per share calculations: | | |
| Basic | 108,030 | 107,236 |
| Diluted | 109,716 | 108,628 |

Rambus Inc.

Supplemental Reconciliation of GAAP to Non-GAAP Results

(Unaudited)

Three Months Ended March 31

| (In thousands, except for per share amounts) | 2026 | 2025 |
| --- | --- | --- |
| Cost of product revenue | $33,729 | $30,583 |
| Adjustment: | | |
| Stock-based compensation expense | (139) | (162) |
| Non-GAAP cost of product revenue | $33,590 | $30,421 |
| Total operating expenses | $81,899 | $70,678 |
| Adjustments: | | |
| Stock-based compensation expense | (11,314) | (11,221) |
| Facility closure costs | (730) | — |
| Acquisition-related costs | — | (21) |
| Non-GAAP total operating expenses | $69,855 | $59,436 |
| Operating income | $61,758 | $63,144 |
| Adjustments: | | |
| Stock-based compensation expense | 11,453 | 11,383 |
| Amortization of acquired intangible assets | 1,675 | 1,713 |
| Facility closure costs | 730 | — |
| Acquisition-related costs | — | 21 |
| Non-GAAP total operating income | $75,616 | $76,261 |
| Net income | $59,858 | $60,303 |
| Stock-based compensation expense | 11,453 | 11,383 |
| Amortization of acquired intangible assets | 1,675 | 1,713 |
| Facility closure costs | 730 | — |
| Acquisition-related costs | — | 21 |
| Income tax adjustment | (4,426) | (8,828) |
| Non-GAAP net income | $69,290 | $64,592 |
| Non-GAAP diluted net income per share | $0.63 | $0.59 |
| Weighted-average shares used in non-GAAP diluted per share calculation | 109,716 | 108,628 |
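The net income section of the reconciliation is a straight sum of GAAP net income and the listed adjustments; dividing by diluted weighted-average shares gives the per-share amount. A minimal sketch using the thousands-level figures from the table; the function and constant names are illustrative.

```python
def non_gaap_net_income_and_eps(gaap_net_income: int, adjustments: dict[str, int],
                                diluted_shares: int) -> tuple[int, float]:
    """Sum GAAP net income and adjustments ($ thousands), then divide by
    diluted weighted-average shares (thousands) to get dollars per share."""
    net_income = gaap_net_income + sum(adjustments.values())
    return net_income, net_income / diluted_shares


Q1_2026_ADJUSTMENTS = {
    "Stock-based compensation expense": 11_453,
    "Amortization of acquired intangible assets": 1_675,
    "Facility closure costs": 730,
    "Acquisition-related costs": 0,
    "Income tax adjustment": -4_426,
}

if __name__ == "__main__":
    net_income, eps = non_gaap_net_income_and_eps(59_858, Q1_2026_ADJUSTMENTS, 109_716)
    print(f"Non-GAAP net income: ${net_income:,}K, diluted EPS: ${eps:.2f}")
```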

Rambus Inc.

Reconciliation of GAAP Forward-Looking Estimates to Non-GAAP Forward-Looking Estimates

(Unaudited)

Three Months Ended June 30, 2026

| 2026 Second Quarter Outlook (In millions) | Low | High |
| --- | --- | --- |
| Forward-looking operating costs and expenses | $131.2 | $127.2 |
| Adjustments: | | |
| Stock-based compensation expense | (15.7) | (15.7) |
| Amortization of acquired intangible assets | (1.5) | (1.5) |
| Forward-looking Non-GAAP operating costs and expenses | $114.0 | $110.0 |