In part one of this three-part blog series, we discussed low-cost attacks that target security chips, such as protocol and software attacks, brute-force glitch attacks, and environmental attacks. In this blog post, we explore attacks executed by more sophisticated adversaries. These include side-channel attacks, clocking attacks, fault injection, and infrared emission analysis.

Sophisticated attackers, who might be working at the university level, can research the security model of your chip and analyze its security with each of these techniques. Let’s take a closer look at them below.

Side-channel Attacks

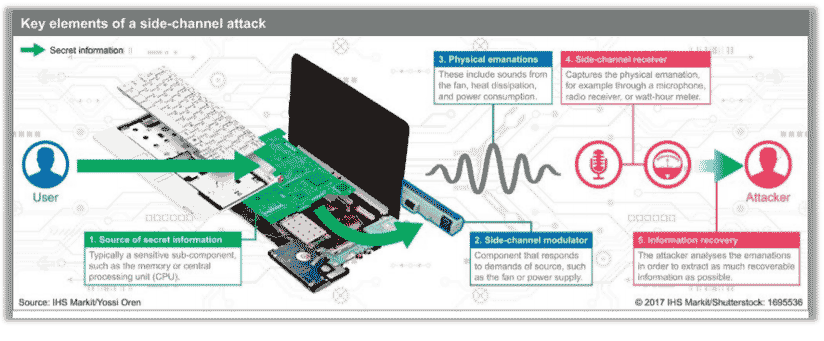

A side-channel attack is one in which your adversary monitors the environment of your chip while it performs a secure calculation. The attacker is looking for the very small amounts of information that inevitably leak from the chip during that calculation.

This information leakage could be power supply noise or electromagnetic noise caused by the circuit performing a secure calculation (the significance of power supply analysis was discovered by Rambus security researchers about 15 years ago). With this type of attack, traces of the power supply are captured while the chip performs a secure calculation, for example an encrypt or decrypt operation. An attacker who applies an extremely detailed statistical analysis to those power supply traces can discern parts of the secret key used in the calculation.
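To make the statistical step concrete, here is a minimal simulation of a correlation-style power analysis in Python. Everything in it is an assumption made for illustration: the hypothetical device leaks the Hamming weight of a 4-bit S-box output plus Gaussian noise, the S-box is borrowed from the PRESENT cipher as a small stand-in, and the names `simulate_traces` and `recover_key` are invented here. Real attacks work on measured traces with many samples per operation.

```python
import random

# 4-bit S-box from the PRESENT cipher, used only as a small nonlinear stand-in
# for the substitution step of a real cipher.
SBOX = [0xC, 0x5, 0x6, 0xB, 0x9, 0x0, 0xA, 0xD,
        0x3, 0xE, 0xF, 0x8, 0x4, 0x7, 0x1, 0x2]


def hamming_weight(x):
    return bin(x).count("1")


def simulate_traces(key, n_traces, noise_sigma, rng):
    """Return (inputs, samples) for a hypothetical leaky device.

    Assumed leakage model: one power sample per operation, equal to the
    Hamming weight of SBOX[input ^ key] plus Gaussian noise.
    """
    inputs = [rng.randrange(16) for _ in range(n_traces)]
    samples = [hamming_weight(SBOX[p ^ key]) + rng.gauss(0.0, noise_sigma)
               for p in inputs]
    return inputs, samples


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def recover_key(inputs, samples):
    """Return the key guess whose predicted leakage best matches the samples."""
    def score(guess):
        predicted = [hamming_weight(SBOX[p ^ guess]) for p in inputs]
        return pearson(predicted, samples)
    return max(range(16), key=score)


# Usage: 2,000 noisy traces from a device holding the key nibble 0xB.
inputs, samples = simulate_traces(key=0xB, n_traces=2000, noise_sigma=1.0,
                                  rng=random.Random(1))
print(hex(recover_key(inputs, samples)))
```

The attacker never sees the key directly. Each guess predicts a leakage pattern, and only the correct guess produces predictions that track the noisy measurements.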

Countermeasures for these types of side-channel attacks can be implemented algorithmically within the cryptographic cores themselves. Interestingly, the degree of protection can be customized by the user.

For example, if a key is only going to be used 10,000 times before it is refreshed, a core rated to resist 20,000 traces would be sufficient. In other instances, a key is used more than a million times or even 10 million times. So, the degree of power supply side-channel protection can be dialed in to accommodate the system and how often its keys are being used.
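On the system side, the same idea can be expressed as a usage budget per key. The sketch below is a hypothetical helper, not part of any real core's API: `KeyUseBudget`, `rated_traces`, and the default `safety_factor` of 0.5 are assumptions chosen so that a core rated for 20,000 traces triggers a refresh after 10,000 uses, matching the numbers above.

```python
class KeyUseBudget:
    """Counts operations under one key and signals when a refresh is due.

    rated_traces is the number of traces the core is assumed to resist;
    safety_factor keeps actual usage below that rating.
    """

    def __init__(self, rated_traces, safety_factor=0.5):
        self.limit = int(rated_traces * safety_factor)
        self.uses = 0

    def record_use(self):
        """Record one operation; return True once the key must be refreshed."""
        self.uses += 1
        return self.uses >= self.limit

    def refresh(self):
        """Call after a new key has been installed."""
        self.uses = 0


# Usage: a core rated for 20,000 traces, key refreshed after 10,000 uses.
budget = KeyUseBudget(rated_traces=20_000)
for _ in range(10_000):
    refresh_due = budget.record_use()
print(refresh_due)
```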

Clocking Attacks

Clocking attacks are quite similar to the environmental attacks we previously discussed in part one of our three-part blog series. In this type of attack, an adversary takes control of the clock going into the chip, or the clock the chip uses to perform the secure algorithm and computation. As with an environmental attack, every digital circuit within the chip has been designed for an expected range of clock frequency, voltage, and temperature. If an adversary can drive the clock beyond those extremes, aberrant behavior can be induced in the chip. This gives an adversary a foothold into attacking the chip and forcing it to reveal its secret data.

Overclocking countermeasures are quite similar to those used to protect against environmental attacks. For example, ‘first to fail’ circuits are typically effective here, provided they receive the same clock signals as the circuits performing the secure computation. This can be a straightforward method of preventing an adversary from overclocking the chip in some undetectable manner. Another approach is to use wholly internal clock generators on the chip. This eliminates external clock sources, which an adversary with relatively sophisticated signal generators can exploit to take over your chip. If the clock generator itself is fully on chip, it becomes significantly harder for an attacker to seize control of the clock and attack your chip.
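A related check, which is not one of the two countermeasures described above, is a frequency monitor that counts edges of the monitored clock during a window timed by an internal reference oscillator. The Python sketch below only models the comparison logic. The function name, its parameters, and the example frequencies are all hypothetical, and in a real chip this would be a hardware counter and comparator.

```python
def clock_in_range(edges_counted, ref_ticks, ref_hz, min_hz, max_hz):
    """Check a monitored clock against its allowed frequency range.

    edges_counted: edges of the monitored clock seen during the window
    ref_ticks:     length of the window in ticks of the internal reference
    ref_hz:        frequency of the internal reference oscillator
    """
    # Window duration is ref_ticks / ref_hz seconds, so the estimated
    # frequency is edges_counted divided by that duration.
    measured_hz = edges_counted * ref_hz / ref_ticks
    return min_hz <= measured_hz <= max_hz


# Usage: 10 MHz internal reference, 100-tick window, 50 to 150 MHz allowed.
print(clock_in_range(1000, 100, 10_000_000, 50e6, 150e6))  # 100 MHz estimate
print(clock_in_range(4000, 100, 10_000_000, 50e6, 150e6))  # 400 MHz estimate
```

The monitor is only as trustworthy as its reference, which is one reason a fully on-chip clock source matters.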

Fault Injection

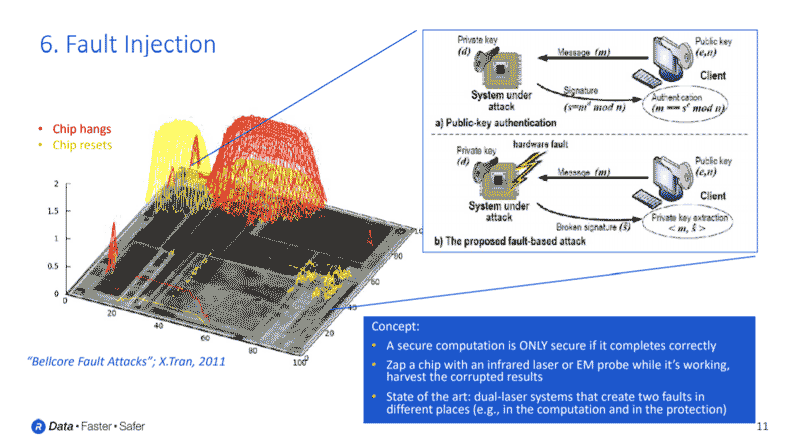

Fault injection is one of the most dangerous and effective attacks targeting secure chips. The concept of fault injection is similar to glitch injection. However, instead of trying to glitch the entire chip all at once, the attacker aims very precise lasers at the secure circuits within your chip, or uses precise electromagnetic probes to cause single bit flips at specific locations.

It should be noted that most of the well-known security algorithms, such as AES, SHA, and elliptic curve cryptography, are considered secure only if the algorithm completes correctly. If an adversary can cause the algorithm to fail during its normal computation, then portions of the secret key might be present in some of the output data. With fault injection, your adversary is trying to intentionally cause a cryptographic circuit to fail and harvest the response. A subsequent statistical analysis of these incorrect responses can lead the adversary to information about the secrets you were trying to protect.
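A compact way to see how one faulty result can leak a secret is the classic fault attack on RSA signatures computed with the Chinese Remainder Theorem. RSA is not one of the algorithms named above; it is swapped in here only because the arithmetic fits in a few lines. The primes are toy values, the `fault_mask` parameter is a hypothetical stand-in for a laser-induced bit flip, and the code assumes Python 3.8 or later for modular inverses via `pow`.

```python
from math import gcd

# Toy parameters for illustration only; real RSA primes are far larger.
P, Q = 10007, 10009
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent


def sign_crt(message, fault_mask=0):
    """RSA-CRT signature; a nonzero fault_mask flips bits in the mod-P half."""
    s_p = pow(message, D % (P - 1), P) ^ fault_mask  # simulated injected fault
    s_q = pow(message, D % (Q - 1), Q)
    # Recombine the two halves (Garner's formula).
    h = (pow(Q, -1, P) * (s_p - s_q)) % P
    return s_q + h * Q


def recover_factor(message, faulty_signature):
    """A faulty signature is still correct mod Q, so a gcd exposes Q."""
    return gcd((pow(faulty_signature, E, N) - message) % N, N)


# Usage: one flipped bit in one half of the computation reveals a prime factor.
message = 123456
good = sign_crt(message)
bad = sign_crt(message, fault_mask=1)
print(pow(good, E, N) == message)          # the unfaulted signature verifies
print(recover_factor(message, bad) == Q)   # the faulted one leaks a factor
```

In this toy case a single faulty output is enough. Attacks on the symmetric algorithms mentioned above typically need several faulty outputs and the statistical analysis described in the text.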

Typically, countermeasures for fault injection must be implemented algorithmically within the security core. So, there can be a great deal of error detection and redundancy included inside of a cryptographic core to ensure that a single bit flip will not cause the algorithm to proceed incorrectly or go undetected. In addition, there are some chip level countermeasures that can be included, as these types of fault injections are usually executed with spot lasers and infrared lasers that are dialed up to very precise spots and injected through the backside of a chip.
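The redundancy idea can be sketched in software as "compute twice, compare, and withhold the output on a mismatch". This is a simplified illustration, not how any particular core implements it: `compute_with_redundancy`, `FaultDetected`, and the `FlakyDigest` test double are hypothetical names, and SHA-256 from Python's `hashlib` stands in for the protected operation.

```python
import hashlib
import hmac


class FaultDetected(Exception):
    """Raised when redundant computations disagree."""


def compute_with_redundancy(operation, data):
    """Run operation twice and release the result only if both runs agree."""
    first = operation(data)
    second = operation(data)
    if not hmac.compare_digest(first, second):
        raise FaultDetected("redundant results differ; output withheld")
    return first


def sha256_digest(data):
    return hashlib.sha256(data).digest()


class FlakyDigest:
    """Test double: flips one bit of the result on a chosen call."""

    def __init__(self, faulty_call):
        self.calls = 0
        self.faulty_call = faulty_call

    def __call__(self, data):
        self.calls += 1
        out = bytearray(sha256_digest(data))
        if self.calls == self.faulty_call:
            out[0] ^= 0x01  # simulated single bit flip
        return bytes(out)


# Usage: a clean run returns the digest; a faulted run raises instead.
print(compute_with_redundancy(sha256_digest, b"message").hex()[:16])
try:
    compute_with_redundancy(FlakyDigest(faulty_call=2), b"message")
except FaultDetected as exc:
    print("blocked:", exc)
```

Two identical runs can be defeated by an attacker who injects the same fault twice, which is one reason hardware designs combine redundancy with error detection codes and randomized timing.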

The backside of silicon tends to be transparent to infrared, as there is no metallization to absorb incoming laser light. This allows an adversary to raster a laser across the backside of your chip to find regions of sensitivity that, when hit with the laser at just the right time, corrupt a secure calculation. At the chip level, there are some backside metallization techniques that can significantly complicate an adversary’s ability to inject focused laser light into critical portions of your chip while the algorithm is executing.

Infrared Emission Analysis

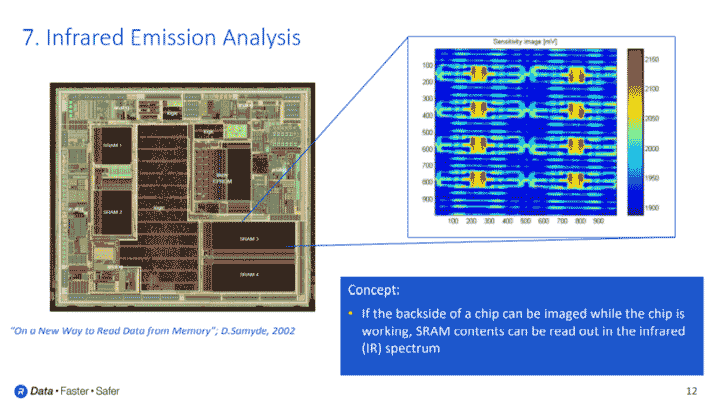

Infrared emission analysis combines elements of the clocking attacks and fault injection techniques described earlier. Using infrared emission analysis, an adversary can advance a cryptographic computation to the precise point at which a secret key or critical piece of data is sitting in unprotected SRAM somewhere on the chip.

SRAM circuits radiate infrared energy differently depending on whether a bit is holding a zero or a one. So, if your adversary is capable of walking an algorithm to the exact point where data is insecure, and then has time to collect the infrared data from the chip while the clock is paused, they can read out the data that was sitting unprotected in the SRAM.

Infrared emission analysis countermeasures include a lot of randomization. For example, secret data can be randomly split into different shares and stored in various sections of SRAM. Random offsets during the calculation can undermine an adversary’s ability to synchronize these attacks, which would be required to extract secret data. Since this class of attacks relies on capturing infrared energy from the backside of the silicon (much as fault injection inserts infrared energy through it), backside metallization can be used to thwart an adversary’s ability to analyze your circuits in this way.
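Splitting a secret into random shares can be illustrated with XOR-based sharing, in which every share on its own is indistinguishable from random bytes. The sketch below is one simple scheme chosen for illustration. The function names are hypothetical, and the text above does not specify which sharing scheme a given chip uses.

```python
import secrets


def split_secret(secret, n_shares):
    """Split secret into n_shares byte strings whose XOR equals the secret."""
    if n_shares < 2:
        raise ValueError("need at least two shares")
    # All but the last share are fresh random bytes.
    shares = [secrets.token_bytes(len(secret)) for _ in range(n_shares - 1)]
    # The last share is the secret XORed with every random share.
    last = bytearray(secret)
    for share in shares:
        for i, byte in enumerate(share):
            last[i] ^= byte
    shares.append(bytes(last))
    return shares


def combine_shares(shares):
    """XOR all shares together to reconstruct the secret."""
    out = bytearray(len(shares[0]))
    for share in shares:
        for i, byte in enumerate(share):
            out[i] ^= byte
    return bytes(out)


# Usage: three shares that could be stored in separate regions of SRAM.
key = bytes(range(16))
shares = split_secret(key, 3)
print(combine_shares(shares) == key)
```

An adversary who images only one region of SRAM recovers a single share, which reveals nothing about the key without the others.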

Read more in this series:

Understanding Anti-Tamper Technology: Part 1

Understanding Anti-Tamper Technology: Part 3
